After viewing my recent International Project Management Day presentation on Human-in-the-Loop (HITL) practices, an attendee asked a simple but profound question:

“This all makes sense. But how do we actually implement it?”

That question has stayed with me.

I expended a lot of energy in 2025, through blog posts and presentations, describing the limitations of generative AI (GenAI) in practical applications. But it’s one thing to agree that generative AI introduces risk. It’s another to design workflows that preserve human judgment in the presence of fluent, confident, probabilistic systems.

Now the designers of GenAI have jumped into the fray. Recently, Anthropic issued a public statement regarding the U.S. Department of Defense’s use of Claude. The statement included this line:

“…without proper oversight, fully autonomous weapons cannot be relied upon to exercise the critical judgment that our highly trained professional troops exhibit every day.”

The domain there is defense. Ours is content, strategy, and project leadership. But the principle transfers cleanly.

AI systems do not exercise judgment. Humans do.

The risk in everyday professional environments is not that GenAI will launch weapons. The risk is quieter: that we gradually outsource evaluation, synthesis, and dissent. That we begin to accept fluency as understanding. That we mistake coherence for truth.

In last month’s post, I examined the effects of two cognitive shortcuts, automation bias and confirmation bias, that can crop up in our use of GenAI. But the deeper concern isn’t simply bias. It is the potential erosion of critical thinking.

If GenAI reduces friction, we must intentionally reintroduce the right kind of friction.

In this post, I’ll explore:

- Why AI-assisted workflows can quietly weaken critical thinking

- Where Human-in-the-Loop fits along the spectrum of human–AI collaboration

- What Cognitive Forcing Functions (CFFs) are—and what recent research says about their impact

- Practical ways to design cognitive friction into professional workflows

The goal is not to slow AI adoption. It is to ensure that efficiency does not come at the expense of judgment.

The Risk to Critical Thinking

GenAI systems are designed to reduce cognitive load. That’s part of their appeal. They summarize, structure, generate, and recommend—quickly and politely.

For some quick background, the term “cognitive load” comes from the fields of cognitive psychology and instructional design and refers to the effort required by the brain’s working memory. A specific type of cognitive load, intrinsic cognitive load, is the effort associated with a specific topic.

When tools reduce effort, and thus our intrinsic cognitive load, they can also reduce our intellectual engagement with the topic, content, plan, or product.

Fluency Is Not Understanding

AI-generated text is coherent, well-structured, and often persuasive. That fluency creates an illusion of mastery.

- You read the draft. It sounds right.

- You scan the summary. It feels complete.

- You review the risk log. It appears comprehensive.

But fluency is not the same as comprehension, depth, or suitability.

Accepting the first output we receive from an AI tool without question is a form of anchoring bias, a special flavor of the automation bias I described in last month’s blog post. A 2024 Spanish university study confirmed this tendency in an experiment that tested people’s ability to detect and correct errors in AI-generated predictions about inmate recidivism.

The authors of this study noticed the “anchoring effect of incorrect AI support on the human decision… AI errors are very likely to compromise [a person’s] final decision even when those AI errors occur after accurate human judgment.”

In content work, anchoring bias can lead us to accept the mundane—documents that feel competent but lack depth or originality and might even contain errors. In project environments, it can result in premature convergence: “The model agrees, so we’re aligned.”

Critical thinking erodes not through neglect, but through acceptance of easy answers.

Over-Reliance as Cognitive Offloading

Automation bias is often described as over-reliance. Another way to frame it is cognitive offloading. We offload synthesis and analysis in the name of expediency.

Some offloading has become conventional. Calculators did not destroy mathematics, though some might argue their use eroded our ability to perform calculations in our heads. But when offloading replaces critical thinking, not just execution, professional capability shifts, especially among those less confident in their own skills.

A study of knowledge workers conducted by Microsoft and published in April 2025 concluded:

“When considering both task- and user-specific factors, a user’s task-specific self-confidence and confidence in GenAI are predictive of whether critical thinking is enacted… higher confidence in GenAI is associated with less critical thinking, while higher self-confidence is associated with more critical thinking.”

Critical thinking in a professional capacity depends on:

- Engaging with source material

- Weighing trade-offs

- Surfacing ambiguity

- Holding competing ideas in tension

When GenAI compresses ambiguity into a neat response, it removes the friction that prompts deeper thought.

For content professionals and project leaders alike, that shift is not trivial. It can be risky—not only to the deliverable but also to our own (and our peers’) professional development.

The Spectrum of Human–AI Collaboration (And Where HITL Fits)

How can we reduce the risk? Human-in-the-Loop (HITL), a practice that involves humans in automated processes, has become an accepted way to reinsert human judgment into AI-related decision-making and, thus, theoretically improve outcomes.

But not all HITL strategies are alike.

In practice, collaboration between humans and AI exists on a spectrum.

1. AI as Tool

When we use AI as a single-instance tool, human judgment (accept or reject) remains central. Examples are spellcheck, simple grammar corrections, and formatting assistance.

2. AI as Assistant

When we use AI to assist with early work in a process, it can subtly influence the direction of our decisions. Examples are drafting content, summarizing meetings, and suggesting structures.

Some would argue that anchoring begins here.

3. AI as Advisor

When we ask AI to offer choices and strategies, it can impact the integrity of our decisions. Examples are asking AI to recommend steps in a process, prioritize risks, or propose solutions. We can be more easily swayed when the responses feel comprehensive and authoritative.

4. AI as Proxy

When human involvement in a set of actions or decisions becomes symbolic, trite, or post hoc, AI essentially acts without review. Examples are automated publishing from GenAI prompts and rolling analyses automatically into a dashboard or register. Here is where agentic AI begins.

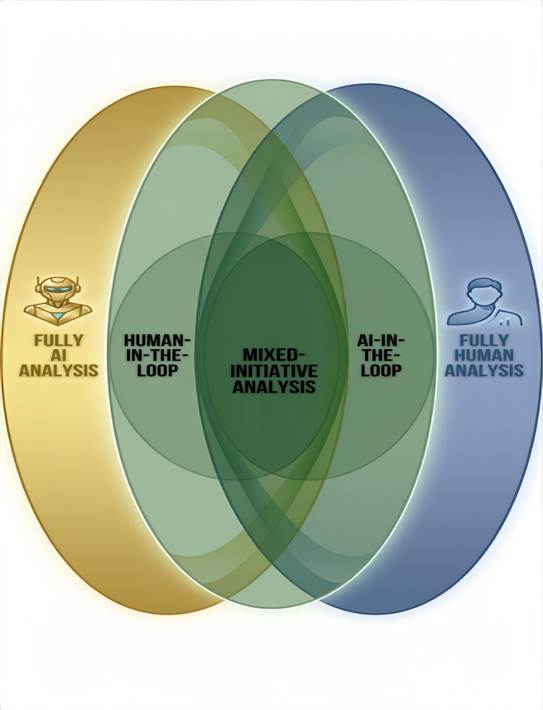

In a 2023 discussion of human-AI teaming (HAT) at the United Nations Institute for Disarmament Research (UNIDIR), Dr. Mennatallah El-Assady presented the HITL spectrum as a Venn diagram (see the representation in Figure 1). Her model suggests that some systems might be entirely automated and others entirely manual, but most lie somewhere in between. The spectrum challenges us to think about what each “agent” brings to the problem at hand.

The quality of human engagement matters most as we move from AI-as-tool (on the right side of the diagram) to AI-as-proxy (on the left side of the diagram). But inserting a human checkpoint does not necessarily preserve thinking.

If the human merely glances and approves, we have compliance theater, not critical engagement.

A Harvard research group underscores the need for “cognitive motivation” in its 2021 paper. The group warns that merely reading explanations of an AI’s reasoning does not lead to increased “metacognitive engagement” among AI users. Instead, explainable AI (or XAI) can reinforce automation bias. In other words, transparency alone does not guarantee critical engagement.

What matters most in AI engagement, especially in HITL processes, is cognitive friction.

Which brings us to the most recent research, and back to the attendee’s question about implementing HITL.

Cognitive Forcing Functions: Making HITL Real

Human-in-the-Loop is a structural principle. Cognitive Forcing Functions (CFFs) are how that principle becomes operational.

In the 2026 Microsoft paper “An Experimental Comparison of Cognitive Forcing Functions for Execution Plans in AI-Assisted Writing,” Ghosh et al. examine how structured interventions influence the quality of reasoning when individuals work with AI-generated plans.

Participants in the study used AI assistance to generate steps in a plan. Some were allowed to accept the AI output as is or make minor edits. Others were required to complete structured reflection steps before proceeding, a type of forced HITL that included cognitive friction.

Cognitive friction, a momentary feeling of disorientation or dissonance, is like a speed bump for your brain; it slows you down and, in this case, encourages reflection.

For this particular speed bump, the researchers asked participants to evaluate the premises of the AI’s steps before moving on. The researchers based their approach on Halpern’s framework for critical thinking, which includes the skill of argument analysis.

The results:

- Participants without such structure were significantly more likely to accept AI-generated plans at face value—even when those plans contained gaps.

- Participants who were forced to complete the reflection steps were significantly less reliant on AI, achieved higher accuracy, and did so without painfully increasing their cognitive load.

The researchers argue that, in a world with a “growing prevalence of plan-based workflows,” adding cognitive forcing functions, such as structured reflection, might be the best way to stimulate critical thinking.

The distinction is clear. Passive review asks: “Does this look fine?” Cognitive forcing asks:

- “What assumptions underlie this?”

- “What might fail?”

- “What alternatives deserve consideration?”

Without forcing functions, Human-in-the-Loop can become symbolic. With forcing functions, it becomes an epistemic discipline.

For professionals whose credibility depends on sound reasoning, that distinction matters.

Practical Ways to Implement Cognitive Friction

Extrapolating from the Microsoft team’s findings, we can all identify ways to use CFFs to introduce cognitive friction into workflows that require human critical thinking. I list some suggestions below. What others can you suggest?

Prompt-Level Forcing Functions

Introduce friction at the prompt stage by offering a best-practices checklist for the prompter:

- “List assumptions underlying this request.”

- “What evidence contradicts this approach?”

- “What risks might a skeptical stakeholder identify?”

- “What two alternative approaches are you asking the AI to compare? What are the trade-offs?”

A similar checklist could ask the human-in-the-loop to evaluate the AI’s output. These small adjustments interrupt automatic agreement.
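For teams that route prompts through a shared script or internal tool, the checklist above could be enforced rather than merely suggested. What follows is a minimal Python sketch of that idea, not a reference implementation. The names `CHECKLIST`, `unanswered`, and `build_prompt`, and the five-word minimum, are my own assumptions for illustration.

```python
# Hypothetical prompt gate: names and thresholds are illustrative assumptions.

# Checklist questions taken from the list above.
CHECKLIST = (
    "List assumptions underlying this request.",
    "What evidence contradicts this approach?",
    "What risks might a skeptical stakeholder identify?",
    "What two alternative approaches are you asking the AI to compare? "
    "What are the trade-offs?",
)

MIN_ANSWER_WORDS = 5  # arbitrary floor to discourage one-word answers


def unanswered(answers: dict[str, str]) -> list[str]:
    """Return the checklist questions whose answers are missing or too thin."""
    return [
        question
        for question in CHECKLIST
        if len(answers.get(question, "").split()) < MIN_ANSWER_WORDS
    ]


def build_prompt(request: str, answers: dict[str, str]) -> str:
    """Release the prompt only after every checklist question is answered."""
    missing = unanswered(answers)
    if missing:
        raise ValueError("Answer these first: " + " | ".join(missing))
    notes = "\n".join(f"- {q} {answers[q].strip()}" for q in CHECKLIST)
    return f"{request.strip()}\n\nPrompter's notes:\n{notes}"
```

A word count is a crude proxy for reflection, so treat the threshold as a nudge. The point of the sketch is only that the prompt cannot be sent until the prompter has paused to write something down.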

Structural Workflow Forcing Functions

Embed friction in a workflow. For example, require:

- At least one non-AI-generated alternative before selection

- That drafting be separate from decision meetings

- A review of primary sources after generating AI summaries

- That problem- and solution-framing generated or influenced by AI be documented

If a summary replaces reading entirely, critical thinking diminishes. If a risk list replaces discussion, nuance disappears.

Friction restores deliberation.
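If your team tracks decisions in a tool that can run a script, the four requirements above could be expressed as a simple pre-meeting check. The sketch below is one hypothetical way to do that in Python. `Option`, `DecisionPacket`, and `unmet_requirements` are names I made up for illustration, and the fields assume the team records this information by hand.

```python
from dataclasses import dataclass, field


@dataclass
class Option:
    summary: str
    ai_generated: bool


@dataclass
class DecisionPacket:
    """Hypothetical record a team fills in before a decision meeting."""
    options: list[Option] = field(default_factory=list)
    drafted_before_meeting: bool = False
    used_ai_summary: bool = True
    primary_sources_reviewed: list[str] = field(default_factory=list)
    # Free-text note on how AI shaped the framing; write "none" if it did not.
    ai_framing_note: str = ""


def unmet_requirements(packet: DecisionPacket) -> list[str]:
    """Return the workflow requirements this packet does not yet satisfy."""
    gaps = []
    if not any(not option.ai_generated for option in packet.options):
        gaps.append("Add at least one non-AI-generated alternative.")
    if not packet.drafted_before_meeting:
        gaps.append("Separate drafting from the decision meeting.")
    if packet.used_ai_summary and not packet.primary_sources_reviewed:
        gaps.append("Review primary sources behind the AI summary.")
    if not packet.ai_framing_note.strip():
        gaps.append("Document how AI shaped the problem or solution framing.")
    return gaps
```

An empty list means the packet is ready for discussion. A non-empty list tells the team what to do before the meeting, which is the friction working as intended.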

Governance-Level Forcing Functions

At the leadership level:

- Define where AI can suggest and where humans must decide

- Log AI involvement in high-impact decisions

- Assign override authority

- Require second-pass review for public-facing or compliance materials

Not all outputs carry equal risk. High-impact decisions warrant deeper engagement.

Friction is not inefficiency. It is professional due diligence.
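The governance rules above can also be written down as a small policy check, so that the required controls are looked up rather than remembered. This Python sketch assumes a two-flag risk model (high impact, public-facing or compliance) that I invented for illustration. `Deliverable` and `required_controls` are hypothetical names, and a real policy would likely need more nuance.

```python
from dataclasses import dataclass


@dataclass
class Deliverable:
    """Hypothetical description of a piece of work under review."""
    title: str
    high_impact: bool
    public_or_compliance: bool
    ai_involved: bool
    override_owner: str = ""  # person with authority to overrule AI output


def required_controls(item: Deliverable) -> list[str]:
    """List the governance controls this deliverable still needs."""
    controls = []
    if item.high_impact:
        # AI may suggest here, but a named human makes the call.
        controls.append("human decision required")
        if item.ai_involved:
            controls.append("log AI involvement")
    if item.ai_involved and not item.override_owner:
        controls.append("assign override authority")
    if item.public_or_compliance:
        controls.append("second-pass review")
    return controls
```

For example, an AI-assisted press release with no override owner would come back with all four controls, while an internal note written without AI would come back with none.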

Conclusion: Designing for Judgment

GenAI will continue to reduce cognitive load. It will grow more fluent, more embedded, and more persuasive.

The professional question is not whether we use it. It is whether we design its use responsibly.

Human-in-the-Loop without Cognitive Forcing Functions risks becoming procedural compliance. It signals oversight without structurally preserving thought.

Research, such as that of Ghosh et al., reinforces what many experienced practitioners intuitively know: structured friction improves reasoning quality. Deliberate interruption strengthens evaluation. Critical thinking can be supported—or weakened—by design.

AI can extend capability, but it cannot assume accountability. It cannot contextualize consequences. It cannot bear responsibility for outcomes.

Those remain human obligations.

Preserving critical thinking in an automated world is not resistance to innovation. It is the stewardship of professional standards.

Image by Phong Bùi Nam from Pixabay.
