Visionaries gave us products that disrupted markets, but they always had a strategy to back up the vision. Steve Jobs gave us a cellular phone that had a touchscreen keyboard because he hated mechanical ones. It also played music like Apple’s popular iPod and offered a world of apps you could download from Apple itself.

When Herb Kelleher took Southwest Airlines nationwide, he had a vision for making air travel affordable for all: he would model it after Greyhound bus lines. For better or worse, that led Southwest to implement its less expensive point-to-point flight patterns, distinct from the other airlines’ hub-and-spoke patterns.

The vision drove the strategy, and, no doubt, many project managers and communications professionals made it work.

In recent months, I have heard a subtle but important shift in how professionals talk about strategy. Increasingly, teams are not just using AI to support execution; they are asking it to suggest direction. Prompts such as “What should our strategy be?” or “What is the best approach?” crop up more and more in both project environments and content strategy discussions.

This shift raises an important question: Are we improving strategic thinking, or are we outsourcing it?

This post explores the following:

- What Strategy Really Is

- Features of Experience-Based Strategy

- Features of AI-Influenced Strategy

- Comparison of the Two Approaches

- The Blended Approach—And Its Risks

- Caveat: HITL Is Not a Panacea

- Conditions for Effective Blending

- Structuring Strategy in an AI Environment: A Model

- Practical Applications

- Strategy Still Requires Human Ownership

What Strategy Really Is

To answer that question, we need to be clear about what strategy actually is. In his widely cited talk “A Plan Is Not a Strategy,” Roger Martin defines strategy as:

“an integrated set of choices that positions you on a playing field of your choice… It’s a theory… about how and why you are going to win.”

This definition emphasizes that strategy is not a checklist or a plan. It is a set of interconnected decisions that “specifies a competitive [future] outcome” while:

- Accepting uncertainty

- Laying out the logic

- Avoiding overcomplication

It is, in essence, a journey guided by judgment. That distinction matters because strategy has traditionally been rooted in human experience. Leaders and practitioners draw on prior outcomes, contextual awareness, well-honed insight, and a yen for innovation to make decisions proactively. Rich Horwath calls these qualities “acumen.”

In project management, this might involve balancing stakeholder priorities or navigating organizational constraints. In content strategy, it might involve interpreting audience needs or shaping messaging in a complex environment. In both cases, strategy is less about certainty and more about informed judgment.

Features of Experience-Based Strategy

In a February 2026 Harvard Business Review article, David S. Duncan describes experience-based judgment as the “capacity to act wisely in situations where rules by themselves are insufficient.” Experienced workers recognize what matters most, weigh alternatives, and anticipate consequences.

The way those employees strategize is difficult to replicate through automated systems. It incorporates:

- Contextual awareness, including organizational culture, political realities, and stakeholder expectations

- Ethical sensitivity, particularly in decisions that affect reputation or trust

- Ability to interpret weak or ambiguous signals—those early indicators that often precede meaningful change

At the same time, experience-based strategy has well-documented limitations. Human decision-making is subject to cognitive bias, including overconfidence, anchoring, and groupthink. For more on these topics, review my January 2026 blog post, “Cognitive Bias in GenAI Use: From Groupthink to Human Mitigation.”

Research by Dan Lovallo and Olivier Sibony, published through McKinsey & Company, shows that executives frequently rely on intuitive judgment shaped by cognitive biases, often reinforcing prior assumptions rather than rigorously challenging them. These limitations do not invalidate experience, but they do suggest that human judgment alone is not always sufficient.

Features of AI-Influenced Strategy

AI systems offer a different set of capabilities that are increasingly influencing strategic work. They can identify patterns across large datasets, generate multiple scenarios, and surface correlations that may not be immediately visible to human analysts. In strategy discussions, this can translate into:

- Better real-time insights

- Improved forecasting accuracy

- Faster exploration of options and modeling

- Optimized efficiency in data collection and reporting

- More data-informed recommendations

- Enhanced collaboration on strategy decisions

A March 2025 NexStrat analysis suggests improvements of 20% to 40% in these areas, though results may vary by use case and implementation.

However, an AI-influenced strategy comes with important constraints. Large language models (LLMs) do not possess true contextual understanding of a specific organization, nor do they have accountability for outcomes. Additionally, their outputs are shaped by training data, which reflects historical patterns rather than future possibilities. As a result, AI-generated strategic suggestions often converge on what is typical rather than what is differentiated.

Also still relevant is the 2023 research by Shakked Noy and Whitney Zhang, based on an experiment with college-educated professionals completing workplace writing tasks. It found that AI can compress the drafting and synthesis parts of knowledge work, but that its effect was limited to productivity gains. AI cannot replace the human work of framing the problem, making trade-offs, and deciding what “good” means in an organization, all of which are elements of experience-based strategy.

Comparison of the Two Approaches

These differences suggest that experience-based and AI-influenced strategies operate in fundamentally different ways. Experience-based strategy is deep but narrow, shaped by specific contexts and lived knowledge. AI-influenced strategy is broad but shallow, capable of scanning wide landscapes but limited in its ability to interpret meaning within them.

Neither approach is inherently superior, but each is better suited to certain conditions.

Experience-Based Strategy Works Best When:

- Context is complex

- Stakes are high

- Ethical or reputational risks are significant

- Organizational dynamics matter

Examples include turning executive vision into high-level KPIs, translating strategic direction into PMO-level guidance, and developing a content strategy for a complex corporate structure.

AI-Influenced Strategy Works Best When:

- Data is abundant

- Patterns are repeatable

- Multiple scenarios are needed quickly

- Speed is a priority

Examples include product forecasting, competitive analysis, resource allocation, risk management, and user profiling.

Many strategic decisions, however, fall somewhere in between the two.

The Blended Approach—and Its Risks

Because real-world strategy making can sit between these extremes, organizations might adopt a blended approach that combines human judgment with AI-generated insights. This approach can:

- Speed research and synthesis

- Expand the range of options and scenarios considered

- Improve pattern recognition

- Retain human accountability

When complexity is broad and change is rapid, AI can shorten some strategy development cycles by up to 50%, according to McKinsey, as cited in the March 2025 NexStrat blog post. The NexStrat authors recommend a structured implementation approach that follows a path familiar to most project managers.

However, blending introduces risks that are easy to overlook:

- False authority: AI outputs appear more credible than they are

- Strategy drift: decisions align with what AI can generate, not necessarily the full goal

- Introduction of bias: strategy can inherit flaws built into the AI model

- Diluted accountability: responsibility can subtly shift away from humans

Over time, these risks can subtly reshape how strategy is formed. Some system of checks and balances is needed.

Caveat: HITL Is Not a Panacea

In recent months, the concept of human-in-the-loop (HITL) has been presented as a safeguard in AI-assisted work, emphasizing the role of human oversight in reviewing and validating outputs. Simple HITL models, however, can fail to engage the critical thinking skills needed to effectively counteract AI’s weaknesses. For more on this topic, review my February 2026 blog post, “Critical Thinking and GenAI: Why Human-in-the-Loop Needs Cognitive Friction.”

In the context of strategy, AI assistance poses a particular challenge: it can interfere with the development of acumen among less experienced workers, the very acumen needed to counterbalance AI’s worst tendencies. David S. Duncan tackles this paradox in his February 2026 Harvard Business Review article. Neither simple HITL nor escalating protocols, he argues, can solve this deeper problem if they teach “that uncertainty is something you hand off.”

Effective strategic work requires space for creation, including the discomfort and uncertainty that come with it. Practitioners need opportunities to frame problems, generate options, and test assumptions without immediately deferring to AI-generated answers. If we don’t provide this space, we risk failing to fully train the next generation of leaders.

Conditions for Effective Blending

To make a blended approach effective, organizations and individuals need to be deliberate about how AI is integrated into strategy formation. This requires more than simply adding a review step; it requires structuring the interaction between human judgment and machine-generated insight.

A few conditions are particularly important:

- AI and Data Governance: Organizational principles define the boundaries for use.

- Clear role definition: AI generates inputs and explores possibilities, but humans remain accountable for decisions.

- Human-led framing: Goals, constraints, and success criteria are defined before AI is consulted.

- Cognitive friction: AI outputs are questioned, compared, and interpreted—not simply accepted.

- Transparency: AI’s role in shaping strategy is acknowledged, especially in stakeholder-facing contexts.

These practices align with guidance from the National Institute of Standards and Technology and UNESCO, both of which emphasize human oversight, accountability, and traceability in AI-enabled systems. (Note that I mention the principles from these organizations in my blog post “A New Code for Communicators: Ethics for an Automated Workplace.”)

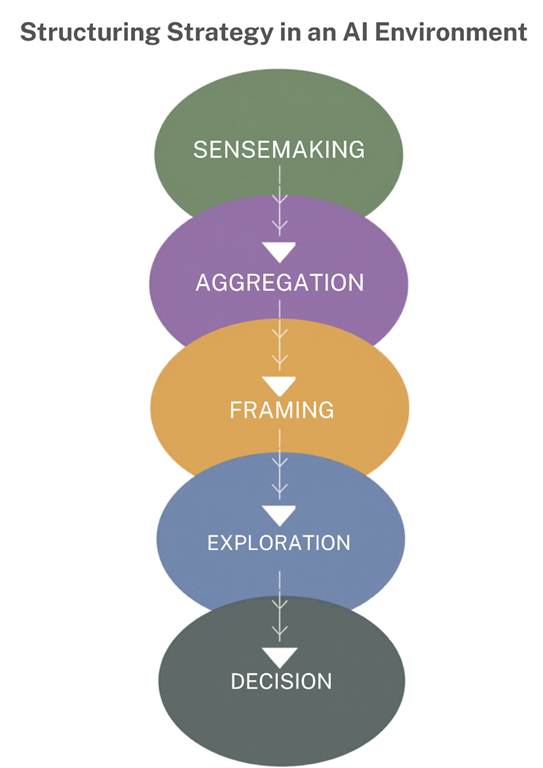

Structuring Strategy in an AI Environment: A Model

The broader implication is that strategy in an AI-influenced environment must be intentionally structured. The goal is to implement clear roles, processes, and expectations to avoid skewing both the outputs of strategy and the thinking behind it.

A blended approach usually has five layers, as the following graphic shows:

Here’s how to understand the five layers:

- Sensemaking layer: AI scans data, market signals, customer feedback, internal knowledge, and competitor activity to surface patterns and anomalies.

- Aggregation layer: AI and humans combine those signals into a summary, coherent evidence base, or decision packet.

- Framing layer: Humans decide what problems matter, what trade-offs are real, what assumptions should guide the strategy, and how to define success.

- Exploration layer: AI quickly generates scenarios, alternative moves, and “what ifs,” and tests them with the help of human interpretation.

- Decision layer: Leaders apply judgment, context, and values to choose a direction and commit resources.

This model pulls from research by Csaszar, Ketkar, and Kim that analyzes the cognitive processes involved in AI-assisted strategy development, including search, representation, aggregation, generation, and evaluation. (Their article “Artificial Intelligence and Strategic Decision-Making: Evidence from Entrepreneurs and Investors” appeared in Strategy Science in November 2024.) It aligns with current thinking that strategy is shifting from static planning to real-time, adaptive strategy, with AI and human judgment working together. Csaszar et al. conclude that:

“As AI continues to advance, such changes have the potential to boost the quality, efficiency, heterogeneity, and availability of strategic decision-making, making the augmentation of human strategists by AI a definite possibility.”

Csaszar et al., “Artificial Intelligence and Strategic Decision-Making: Evidence from Entrepreneurs and Investors,” Strategy Science, November 2024
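For readers who think in process flows, the five layers described above can be sketched as a small pipeline. This is only an illustration under stated assumptions, not an implementation from Csaszar et al. or from any tool: every name in it (Framing, Decision, run_strategy_cycle, and the stand-in functions for the AI steps) is hypothetical, and the AI layers are simple placeholders rather than real model calls. What it tries to show is where human ownership sits. Framing and the final choice are supplied by people, and no decision is recorded without a named owner and a written rationale.

```python
from dataclasses import dataclass
from typing import Callable, List


@dataclass
class Framing:
    """Framing layer output: defined by humans, not by the AI."""
    problem: str
    success_criteria: List[str]
    owner: str  # the person accountable for the decision


@dataclass
class Decision:
    """Decision layer output, with AI involvement recorded for transparency."""
    chosen_option: str
    decided_by: str
    rationale: str
    ai_assisted: bool = True


def sensemaking(raw_signals: List[str]) -> List[str]:
    """Sensemaking layer. Placeholder for an AI scan: it only trims
    whitespace and drops blanks and duplicates, keeping the original order."""
    seen = set()
    signals = []
    for raw in raw_signals:
        signal = raw.strip()
        if signal and signal not in seen:
            seen.add(signal)
            signals.append(signal)
    return signals


def aggregate(signals: List[str]) -> str:
    """Aggregation layer: combine signals into one decision packet."""
    return "; ".join(signals)


def explore(framing: Framing, packet: str) -> List[str]:
    """Exploration layer. Placeholder for AI-generated scenarios."""
    return [
        f"Option {n}: address '{framing.problem}' given [{packet}]"
        for n in (1, 2, 3)
    ]


def run_strategy_cycle(
    raw_signals: List[str],
    frame: Callable[[str], Framing],     # human step: frame the problem
    choose: Callable[[List[str]], int],  # human step: pick an option index
    rationale: str,                      # human step: explain the choice
) -> Decision:
    """Run the five layers in order, keeping humans in the framing and decision steps."""
    packet = aggregate(sensemaking(raw_signals))
    framing = frame(packet)
    options = explore(framing, packet)
    if not rationale.strip():
        raise ValueError("A written human rationale is required.")
    return Decision(options[choose(options)], framing.owner, rationale)


if __name__ == "__main__":
    decision = run_strategy_cycle(
        raw_signals=["renewals slowing", "support tickets rising"],
        frame=lambda packet: Framing("customer retention", ["raise renewals"], "Dana"),
        choose=lambda options: 0,
        rationale="Best fit with this year's budget and stakeholder priorities.",
    )
    print(decision)
```

The design choice worth noting is that the human steps are passed in as arguments rather than defaulted. If nobody supplies a framing, a choice, and a rationale, the cycle cannot complete, which mirrors the idea that AI informs the decision but does not make it.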

Practical Applications

For project managers, this model means using AI to inform—but not determine—key decisions such as risk trade-offs, resource allocation, and stakeholder communication. AI can expand the range of scenarios considered, but human judgment must guide their selection and interpretation.

For content strategists, the same balance applies. AI can support audience analysis and content planning, but decisions about messaging, tone, and ethical positioning require human interpretation. These elements are central to trust and cannot be delegated without consequence.

Strategy Still Requires Human Ownership

Ultimately, the question is not whether AI should be used in strategy, but how it should be used. When applied thoughtfully, AI can enhance our ability to explore complex problems and challenge our assumptions. When applied uncritically, it can narrow our thinking and shift responsibility away from those who are accountable.

For both individuals and organizations, the path forward involves balancing efficiency with intentionality. Practitioners must remain actively engaged in the strategy-formation process, resisting the temptation to outsource uncertainty. Organizations, in turn, must create environments that reinforce this engagement through governance and expectations.

In an automated world, the true differentiator is not access to AI, but the ability to use it without diminishing human responsibility. Strategy, as Roger Martin reminds us, is a theory about how and why we will succeed. That theory still requires human authorship—even when it is informed by machines.

Top image generated with WordPress tools.
